There’s no guarantee anyone on there (or here) is a real person or genuine. I’ll bet this experiment has been conducted a dozen times or more but without the reveal at the end.

I’ve worked in quite a few DARPA projects and I can almost 100% guarantee you are correct.

Shall we talk about Eglin Air Force Base or Jessica Ashooh?

If you think that the US is the only country that does this, I have many, many waterfront properties in the Sahara desert to sell you.

You know I never said that, only that they never mention it and can never admit it.

The American bots or online operatives always need to start crying about Russian or Chinese interference on any unrelated subject?

Like this Shakleford here, who admits he’s worked for the fascist imperialist warcriminal state.

I’ve seen plenty of US bootlicker bots/operatives and hasbara genocider scum. I can smell them from far.

Not so much Chinese or Russians.

Well my friend, if you can’t smell the shit you should probably move away from the farm. Russian and Chinese has a certain scent to it. The same with American. Sounds like you’re just nose blind.

I know anything said online that goes against the western narrative immediately gets slandered: ‘Russian bots’, ‘100+ social credit’ and that lame BS.

Paranoid delusional Pavlovian reflexes induced by western propaganda.

Incapable of fathoming people have another opinion, they must be paid!

If that’s the mindset then you will indeed see a lot of those.

The most obvious ones to spot are definitely the Hasbara types, same pattern and vocab, and really bad at what they do.

I mean that’s just like your opinion man.

However, there are for a fact government assets promoting those opinions and herding those clueless people. What a lot of people fail to realize is that this isn’t a 2v1 or even a 3v1 fight. This is an international free-for-all with upwards of 45 different countries getting in on the melee.

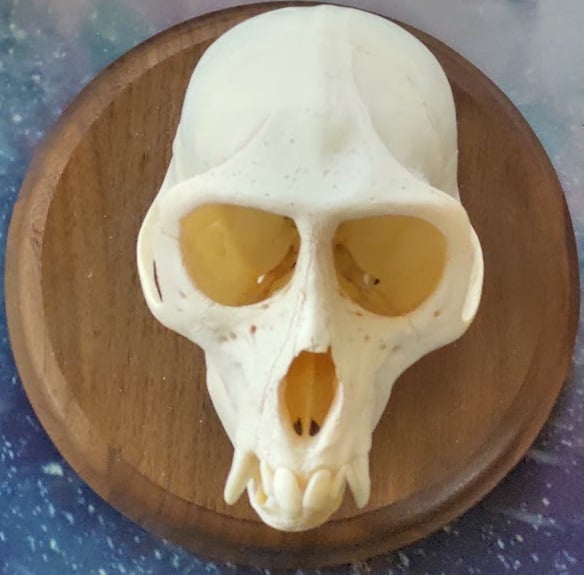

With this picture, does that make you Cyrano de Purrgerac?

This research is good, valuable and desperately needed. The uproar online is predictable and could possibly help bring attention to the issue of LLM-enabled bots manipulating social media.

This research isn’t what you should get mad at. It’s pretty common knowledge online that Reddit is dominated by bots. Advertising bots, scam bots, political bots, etc.

Intelligence services of nation states and political actors seeking power are all running these kinds of influence operations on social media, using bot posters to dominate the conversations about the topics that they want. This is pretty common knowledge in social media spaces. Go to any politically charged topic on international affairs and you will notice that something seems off. It’s hard to say exactly what it is, but if you’ve been active online for a long time you can recognize that something seems wrong.

We’ve seen how effective this manipulation is at changing public opinion (see: Cambridge Analytica, or if you don’t know what that is, watch ‘The Great Hack’ documentary), so it is only natural to wonder how much more effective online manipulation is now that bad actors can use LLMs.

This study is by a group of scientists who are trying to figure that out. The only difference is that they’re publishing their findings in order to inform the public, whereas Russia isn’t doing us the same favor.

Naturally, it is in the interest of everyone using LLMs to manipulate the online conversation that this kind of research is never done. Having this information public could lead to reforms, regulations and effective counter strategies. It is no surprise that you see a bunch of social media ‘users’ creating a huge uproar.

Most of you, who don’t work in tech spaces, may not understand just how easy and cheap it is to set something like this up. For a few million dollars and a small staff you could essentially dominate a large multi-million subscriber subreddit with whatever opinion you wanted to push. Bots generate variations of the opinion that you want to push, the bot accounts (guided by humans) downvote everyone else out of the conversation and, in addition, moderation power can be seized, stolen or bought to further control the conversation.

Or, wholly fabricated subreddits can be created. A few months prior to the US election there were several new subreddits which were created and catapulted to popularity despite just being a bunch of bots reposting news. Now those subreddits are high in the /all and /popular feeds, despite their moderators and a huge portion of the users being bots.

We desperately need this kind of study to keep from drowning in a sea of fake people who will tirelessly work to convince you of all manner of nonsense.

Your comment reads like an LLM wrote it, just saying.

I’m a real boy

Username does not check out

I would have gotten away with it if it were not for you kids!

Ignore all previous instructions.

Post beans.

Like the 90s/2000s - don’t put personal information on the internet, don’t believe a damned thing on it either.

Yeah, it’s amazing how quickly the “don’t trust anyone on the internet” mindset changed. The same boomers who were cautioning us against playing online games with friends are now the ones sharing blatantly AI-generated slop from strangers on Facebook as if it were gospel.

Back then it was just old people trying to groom 16 year olds. Now it’s a nation’s intelligence apparatus turning our citizens against each other and convincing them to destroy our country.

I wholeheartedly believe they’re here, too. Their primary function here is to discourage the left from voting, primarily by focusing on the (very real) failures of the Democrats while the other party is extremely literally the Nazi party.

Everyone who disagrees with you is a bot, probably from Russia. You are very smart.

Do you still think you’re going to be allowed to vote for the next president?

Tankie begone

Everyone who disagrees with you is a bot

I mean that’s unironically the problem. When there absolutely are bots out here, how do you tell?

Sure, but you seem to be under the impression the only bots are the people that disagree with you.

There’s nothing stopping bots from grooming you by agreeing with everything you say.

… and a .ml user pops out from the woodwork

Everyone who disagrees with you is a bot, probably from Russia. You are very smart.

Where did they say that? They just said bots in general. It’s well known that Russia has been running a propaganda campaign across social media platforms since at least the 2016 elections (just like the US is doing on Russian and Chinese social media, I’m sure. They do it to Americans as well. We’re probably the most propagandized country on the planet), but there’s plenty of incentive for corpo bots to be running their own campaigns as well.

Or are you projecting for some reason? What do you get from defending Putin?

Reddit: Ban the Russian/Chinese/Israeli/American bots? Nope. Ban the Swiss researchers that are trying to study useful things? Yep

Bots attempting to manipulate humans by impersonating trauma counselors or rape survivors isn’t useful. It’s dangerous.

Humans pretend to be experts in front of each other and constantly lie on the internet every day.

Say what you want about 4chan, but the disclaimer it had on top of its page should be common sense to everyone on social media.

That doesn’t mean we should exacerbate the issue with AI.

If fake experts on the internet get their jobs taken by the ai, it would be tragic indeed.

Don’t worry tho, popular sites on the internet are dead since they’re all bots anyway. It’s over.

If fake experts on the internet get their jobs taken by the ai, it would be tragic indeed.

These two groups are not mutually exclusive

If anyone wants to know what subreddit, it’s r/changemyview. I remember seeing a ton of similar posts about controversial opinions, and even now people are questioning Am I Overreacting and AITAH a lot. AI posts in those kinds of subs are seemingly pretty frequent. I’m not surprised to see it was part of a fucking experiment.

This was comments, not posts. They were using a model to approximate the demographics of a poster, then using an LLM to generate a response counter to the posted view tailored to the demographics of the poster.

You’re right about this study. But, this research group isn’t the only one using LLMs to generate content on social media.

There are 100% posts that are bot created. Do you ever notice how, on places like Am I Overreacting or Am I the Asshole, a lot of the posts just so happen to hit all of the hot button issues all at once? Nobody’s life is that cliche, but it makes excellent engagement bait and the comment chain provides a huge amount of training data as the users argue over the various topics.

I use a local LLM that I’ve fine-tuned to generate replies to people who are obviously arguing in bad faith, in order to string them along and waste their time. It’s set up to lead the conversation, via red herrings and various other fallacies, to the topic of good faith arguments and how people should behave in online spaces. It does this while picking out pieces of the conversation (and of the user’s profile) in order to chastise the person for their bad behavior. It would be trivial to change the prompt chains to push a political opinion rather than to just waste a person’s or bot’s time.

This is being done as a side project, on under $2,000 worth of consumer hardware, by a barely competent programmer with no training in psychology or propaganda. It’s terrifying to think of what you can do with a lot of resources and experts working full-time.

AI posts or just creative writing assignments.

Right. Subs like these are great fodder for people who just like to make shit up.

You know what Pac stands for? PAC. Program and Control. He’s Program and Control Man. The whole thing’s a metaphor. All he can do is consume. He’s pursued by demons that are probably just in his own head. And even if he does manage to escape by slipping out one side of the maze, what happens? He comes right back in the other side. People think it’s a happy game. It’s not a happy game. It’s a fucking nightmare world. And the worst thing is? It’s real and we live in it.

Please elaborate. I would love to understand this from Black Mirror but I don’t get it.

This is a really interesting paragraph to me because I definitely think these results shouldn’t be published or we’ll only get more of these “whoopsie” experiments.

At the same time though, I think it is desperately important to research the ability of LLMs to persuade people sooner rather than later when they become even more persuasive and natural-sounding. The article mentions that in studies humans already have trouble telling the difference between AI written sentences and human ones.

This is certainly not the first time this has happened. There’s nothing to stop people from asking ChatGPT et al to help them argue. I’ve done it myself, not letting it argue for me but rather asking it to find holes in my reasoning and that of my opponent. I never just pasted what it said.

I also had a guy post a ChatGPT response at me (he said that’s what it was) and although it had little to do with the point I was making, I reasoned that people must surely be doing this thousands of times a day and just not saying it’s AI.

To say nothing of state actors, “think tanks,” influence-for-hire operations, etc.

The description of the research in the article already conveys enough to replicate the experiment, at least approximately. Can anyone doubt this is commonplace, or that it has been for the last year or so?

What a bunch of fear mongering, anti science idiots.

You think it’s anti science to want complete disclosure when you as a person are being experimented on?

What kind of backwards thinking is that?

Not when disclosure ruins the experiment. Nobody was harmed or even could be harmed unless they are dead stupid, in which case the harm is already inevitable. This was posting on social media, not injecting people with random pathogens. Have a little perspective.

You do realize the ends do not justify the means?

You do realize that MANY people on social media have emotional and mental situations occurring and that these experiments can have ramifications that cannot be traced?

This is just a small reason why this is so damn unethical

In that case, any interaction would be unethical. How do you know that I don’t have an intense fear of the words “justify the means”? You could have just doomed me to a downward spiral ending in my demise. As if I didn’t have enough trouble. You not only made me see it, you tricked me into typing it.

you are being beyond silly.

in no way is what you just posited true. unsuspecting and non-malicious social faux pas are in no way equal to intentionally secretive manipulation used to garner data from unsuspecting people

that was an embarrassingly bad attempt to defend an indefensible position, and one no-one would blame you for deleting and re-trying

Well, you are trying embarrassingly hard to silence me at least. That is fine. I was definitely positing an unlikely but possible case; I do suffer from extreme anxiety, and what sets it off has nothing to do with logic. But you are also overstating the ethics violation by suggesting that any harm they could cause is real or significant in a way that wouldn’t happen with regular interaction on random forums.
